It is easy to see that Machine Learning is something that takes place in a world right next to the Jungles of AY-TEE. Models are apparently something you find in the Hidden Temple of accurate models.

No wonder that it is not easy to get it all right.

A great talk!

Conference.

Thursday 19th, 2017.

Panel discussion.

Advisory board panel - from Data first to AI first.

by

Anders Arpteg.

Talked about the big trends:

From Artificial Intelligence in the 1950s to 1980s.

Followed by Machine Learning in the 1980s until now.

And moving forward with Deep Learning, from the 2010s and onwards.

Deep Learning is beginning to flourish, as:

- Large datasets of labelled training data, like ImageNet, are becoming available to everyone.

- Computing power, especially GPUs, has again increased tremendously in recent years.

- AI libraries, like TensorFlow, are now available to everyone.

- And (AI) algorithms, such as deep reinforcement learning, have again improved.

According to Kevin Kelly it is also pretty easy to see where this is all going:

The business plans of the next 10,000 start-ups are easy to forecast...

Take X and add AI...

Indeed, many businesses, such as Google, are now transitioning from a Mobile First to an AI First business plan.

The problems are also pretty easy to see:

- Lack of labelled data.

- (Problems with) Model transparency and troubleshooting

(If you have a model with 2,000 co-dependent variables, how could that be explained to humans?).

- Massive software engineering overhead.

- Lack of knowledge/experienced talent.

- Privacy and safety issues.

etc.

Indeed, what are the full consequences for business models, organizations, and cultures, as we move towards an AI first world?

Sure, AI should be all about empowering humans, so that more people can do more complicated tasks.

But there are of course huge consequences.

- Currently, in the US, the most common job is being a truck driver.

Hardly something we will have in an ''AI first'' world (with self-driving cars)!?

What does it even mean to live in an ''AI first'' world?

Certainly, as soon as we understand AI, we stop calling it AI and consider it unintelligent...?

But as long as we don't understand it, we are a little bit afraid of it, and think that its ultimate optimization goal must be the same as ours: to survive... But that is of course not necessarily true...

A great panel discussion!

Talk.

Are you a Yoda, a young Luke Skywalker or a StormTrooper?

by

Ingo Paas, Apotek Hjärtat.

Talked about key skills in Silicon Valley, and elsewhere:

Key skills: passion, focus, engagement, risk taking, value creation, in-depth industry experience, and a track record of failure...!

And then of course, whether we were ''Troopers'' (the majority of people in a company), or ''Luke Skywalkers'' (not quite there yet), trying to learn from the ''Yodas'' (of which there are plenty in the Valley).

And there is of course always a lot out there to learn, with opportunities for many new businesses.

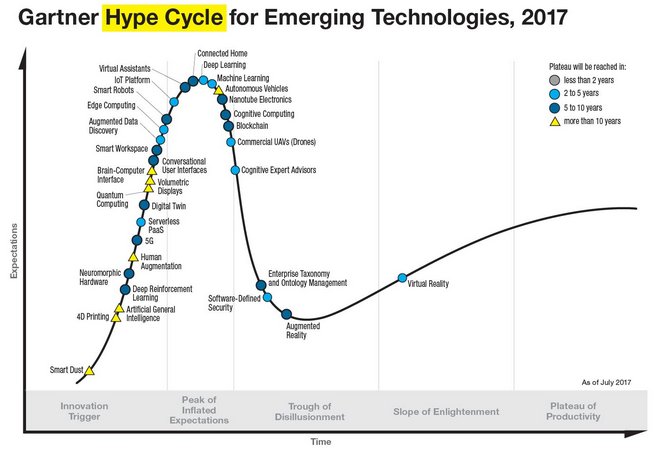

Today's (2017) catchphrases and popular words, things like Mobility, Virtual Reality, Augmented Reality, Bots, Blockchain, IoT, Cloud, Big Data, Machine Learning and AI, are, of course, very exciting, and the starting place for businesses for years to come. But even these things will, of course, eventually give way to new ideas. And being positioned for that is what ''the game'' is all about.

Making it a reality has to do with:

Thinking Big, starting small.

It also allows us to go after the interesting projects (instead of the boring ones).

I.e. most projects are boring, because we have done them before.

Actually, innovation and risk are not optional anymore in today's world.

This is something we all have to do!

Take away:

- Promote curiosity.

- Solve real problems.

- Unlearn and learn.

Talk.

Data empowerment through user-centric design.

by

Werner Kruger, Klarna.

Talked about machine learning for all.

Logically, this has to do with adding real value to businesses through ML, i.e.:

- Identifying opportunities to use ML.

- Being able to execute on those opportunities.

- Having a competitive advantage (higher-quality ML solutions than your competitors).

Talk.

The paradigm shift of the enterprise R&D.

by

Jesse Chao, Ericsson AB.

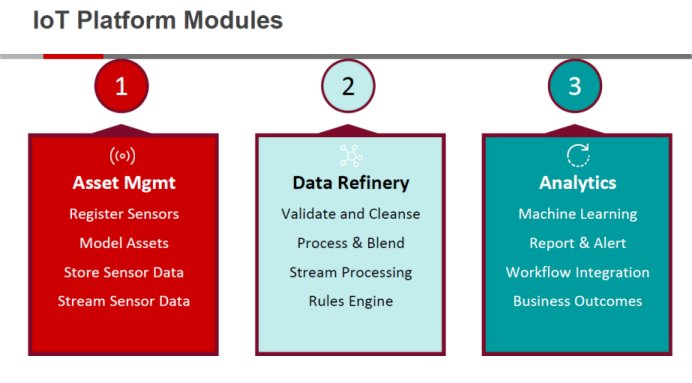

Started by giving a short introduction to the need for the coming 5G network:

- Broadband experience everywhere.

- Smart vehicles, transport and infrastructure.

- Critical control of remote devices. Robots.

- Interactions between humans and IoT.

With speeds of up to 5-10 Gbit/s, 5G will be about 100 times as fast as the current 4G network, with Stockholm and Tallinn among the first movers in 2020.
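As a rough back-of-the-envelope check of the speed comparison above, here is a minimal Python sketch. It only uses the two figures quoted in the talk (5-10 Gbit/s and "about 100 times"); the function names and the 1 GB example file are hypothetical and just there for illustration.

```python
def implied_4g_gbit_per_s(speed_5g_gbit_per_s: float, speedup: float = 100.0) -> float:
    """4G speed implied by a 5G speed and the quoted 'about 100 times' factor."""
    return speed_5g_gbit_per_s / speedup


def transfer_seconds(size_gigabytes: float, speed_gbit_per_s: float) -> float:
    """Ideal transfer time: 1 byte = 8 bits, no protocol overhead assumed."""
    return size_gigabytes * 8 / speed_gbit_per_s


# The two ends of the 5G range quoted in the talk.
for speed_5g in (5.0, 10.0):
    speed_4g = implied_4g_gbit_per_s(speed_5g)
    print(
        f"5G at {speed_5g:g} Gbit/s implies 4G at about {speed_4g * 1000:g} Mbit/s; "
        f"a 1 GB file takes {transfer_seconds(1.0, speed_5g):.1f} s on 5G "
        f"versus {transfer_seconds(1.0, speed_4g):.0f} s on 4G"
    )
```

These are ideal peak numbers; real-world throughput would be lower on both networks.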

Controlling all of this data will obviously take a lot of Data Scientists...

And being a Data Scientist is, of course, the SEXIEST job you can have in the 21st century.

Right there at the intersection of mathematics, statistics, computer science, domain experience and business acumen...